Train


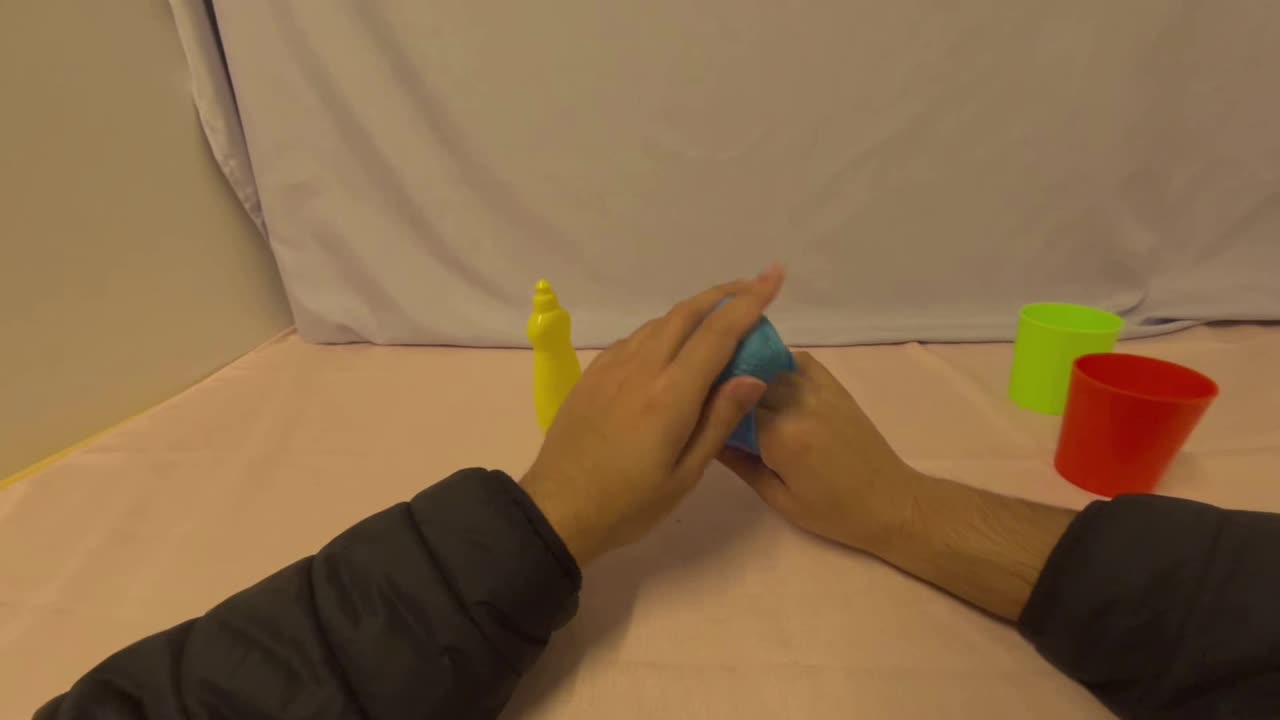

Robots need data quality and diversity before policy training. EgoArena turns raw egocentric video into training signal: bad clips filtered, coverage measured, reviewers tested, consensus weighted, and policies trained on the result.
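To make "reviewers tested, consensus weighted" concrete, here is a minimal sketch. It assumes each reviewer has an accuracy score measured on gold test clips and that each reviewer casts one label per clip. The function name, the vote format, and the default weight are hypothetical illustrations, not EgoArena's actual API or method.

```python
from collections import defaultdict


def weighted_consensus(votes, reviewer_accuracy, default_weight=0.5):
    """Return (winning_label, confidence) for a single clip.

    votes: dict mapping reviewer id -> label (e.g. "keep" or "reject")
    reviewer_accuracy: dict mapping reviewer id -> accuracy in [0, 1]
        measured on gold test clips (assumed to exist for this sketch)
    default_weight: weight used for reviewers with no test history
    """
    if not votes:
        raise ValueError("need at least one vote")

    # Sum each label's support, weighting every vote by reviewer accuracy.
    totals = defaultdict(float)
    for reviewer, label in votes.items():
        totals[label] += reviewer_accuracy.get(reviewer, default_weight)

    total_weight = sum(totals.values())
    if total_weight == 0:
        raise ValueError("all reviewer weights are zero")

    winner = max(totals, key=totals.get)
    return winner, totals[winner] / total_weight


# One strong reviewer outweighs two weak reviewers who disagree.
votes = {"r1": "keep", "r2": "reject", "r3": "reject"}
accuracy = {"r1": 0.95, "r2": 0.40, "r3": 0.45}
label, confidence = weighted_consensus(votes, accuracy)
```

In this example a plain majority vote would reject the clip, while the accuracy-weighted vote keeps it with a confidence only slightly above one half, which is a natural signal to send the clip for another review.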

84% · 0.91 · QA · Diversity measured

Frame continuity: real RLE at 30 fps

Curate verdict: keep high-signal window

Our customers have sold datasets made with us into

Keep your data from being a commodity by using our data quality & diversity platform.

train collectors and annotators

test workers continuously

certify top performers

detect worker vs team drift

relabel or repair bad labels and broken episodes

attach quality, safety, task, embodiment, contact, and dynamics metadata to raw data

score world-model readiness across synchronization, action-observation alignment, contact richness, object motion, latency, embodiment, and task success

curate silver datasets for your budget

train VLMs, robotics models, and forward dynamics models to label and score larger bronze datasets

benchmark which data mixes improve downstream robot policy performance

evaluate counterfactual rollouts through simulators, learned world models, or customer internal evaluators

recommend whether data should be kept, upweighted, downweighted, relabeled, recollected, or used for world-model calibration
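The readiness scoring and keep-or-repair recommendation in the list above could be sketched as follows. This is an illustration under stated assumptions: the dimension names come from the list, but the equal weights, the thresholds, the `label_agreement` input, and both function names are hypothetical, and the world-model calibration outcome is not modeled.

```python
# Dimensions taken from the readiness list above; per-dimension scores in [0, 1].
DIMENSIONS = (
    "synchronization",
    "action_observation_alignment",
    "contact_richness",
    "object_motion",
    "latency",
    "embodiment",
    "task_success",
)


def readiness_score(scores, weights=None):
    """Weighted mean of per-dimension scores (equal weights by default)."""
    weights = weights or {d: 1.0 for d in DIMENSIONS}
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    total = sum(weights[d] for d in DIMENSIONS)
    return sum(weights[d] * scores[d] for d in DIMENSIONS) / total


def recommend(scores, label_agreement):
    """Map an episode to an action. All thresholds are illustrative only."""
    score = readiness_score(scores)
    if label_agreement < 0.6:
        # Labels are unreliable: fix them if the raw episode is usable,
        # otherwise collect the episode again.
        return "relabel" if score >= 0.5 else "recollect"
    if score >= 0.85:
        return "upweight"
    if score >= 0.6:
        return "keep"
    if score >= 0.4:
        return "downweight"
    return "recollect"


strong = {d: 0.9 for d in DIMENSIONS}
weak = {d: 0.5 for d in DIMENSIONS}
print(recommend(strong, label_agreement=0.9))  # upweight
print(recommend(weak, label_agreement=0.9))    # downweight
print(recommend(strong, label_agreement=0.3))  # relabel
```

Keeping label quality separate from the readiness score means a well-recorded episode with poor labels is repaired rather than discarded.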

The industry standard measures only surface-level cleanliness

Generic QA catches bad labels but doesn't say which labels to update

Most companies accept or reject data, then label with basic VLMs

Submit raw egocentric data. EgoArena turns noisy clips into ranked, reviewable signal.